How to choose the right research methods for your discovery process

Discovery is a vital part of product management. But equally important is choosing the correct methodology and researching to the right level of detail. Too much, and you risk getting stuck in analysis paralysis or losing the trust of stakeholders for moving too slowly. Too little, and you expose the company to financial or reputational risk.

It can also be challenging to get stakeholders on board with discovery and align them on what activities are part of the process.

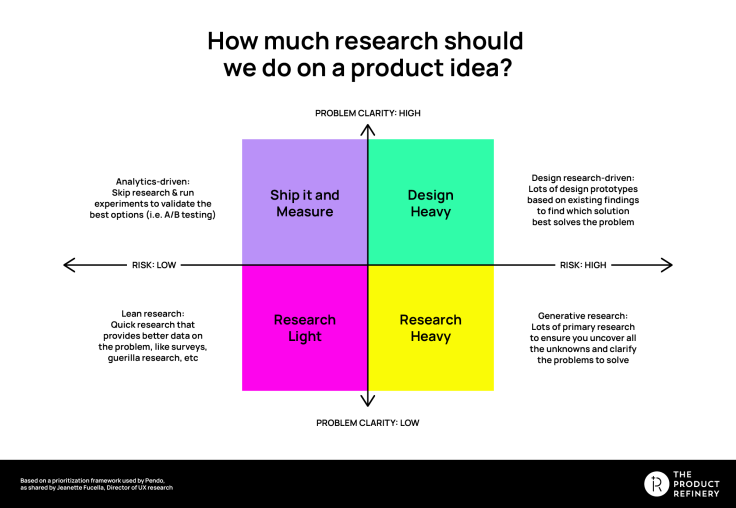

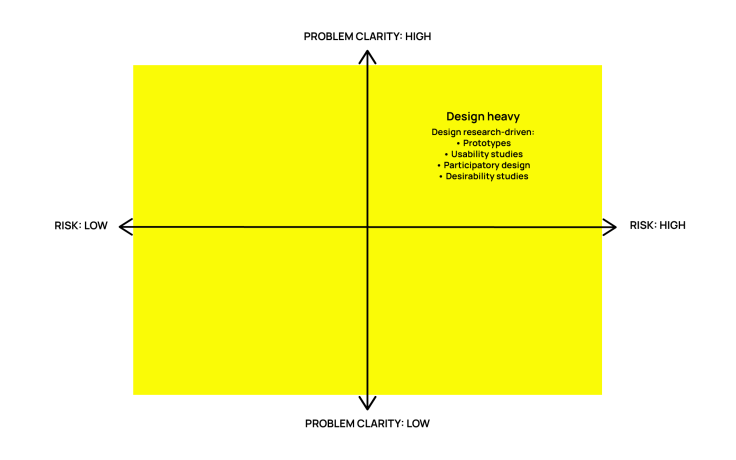

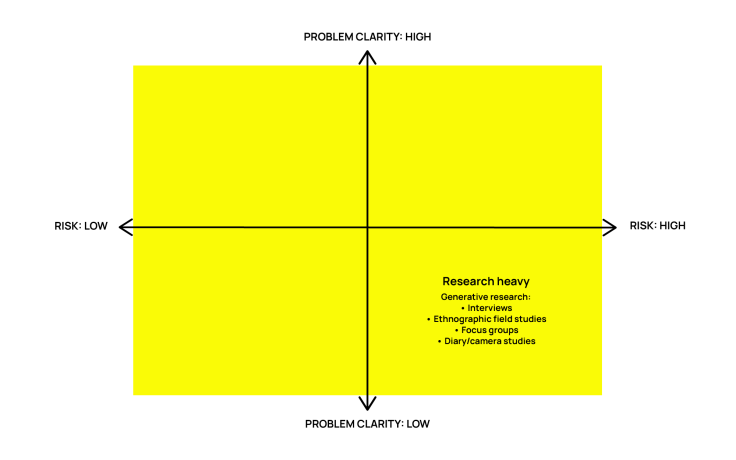

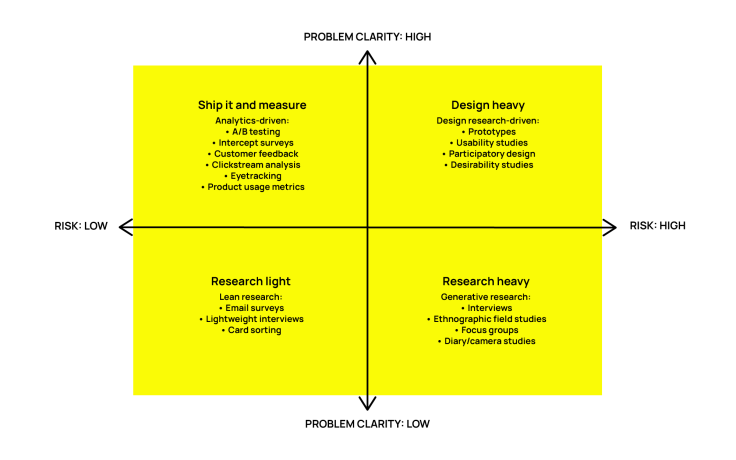

A few weeks ago, I came across this framework by Jeanette Fuccella. It does a great job of clarifying what kind of research is right for each situation.

As you’ll see, each quadrant has a description of the broad types of research that yield enough insight to build the right thing and mitigate the risk of getting it wrong without falling into the trap of analysis paralysis.

And it’s easy to understand, providing a simple way to explain to stakeholders what level of research you’re going to do in discovery and why.

People we’ve taught the framework to love it, but they generally want more detail on the specific types of research they could use in each quadrant. In this article, we’ll give those examples and explore how the framework can help you choose what types of research to use in discovery.

The axes: Problem clarity and risk

Let’s start by learning our way around the framework. It’s a simple two-by-two set out along two axes: problem clarity and risk.

The y-axis of the framework is problem clarity. High problem clarity means you understand the customer problem and have plenty of research to support that understanding. Low problem clarity means you don’t yet understand the customer problem, or whether there is a problem at all.

You could also think of this in terms of the problem and solution space. The lower half of the framework is the problem space—discovery to understand customer problems and which to solve. The upper half is the solution space—discovery to understand how you might solve a customer problem or how well a solution you’ve already come up with solves the customer problems.

The x-axis is risk: the risk of getting it wrong. It’s important to align on what risk means when using this framework. It can refer to the financial risk and opportunity cost of building the wrong thing, as well as the reputational cost of building something your customers dislike.

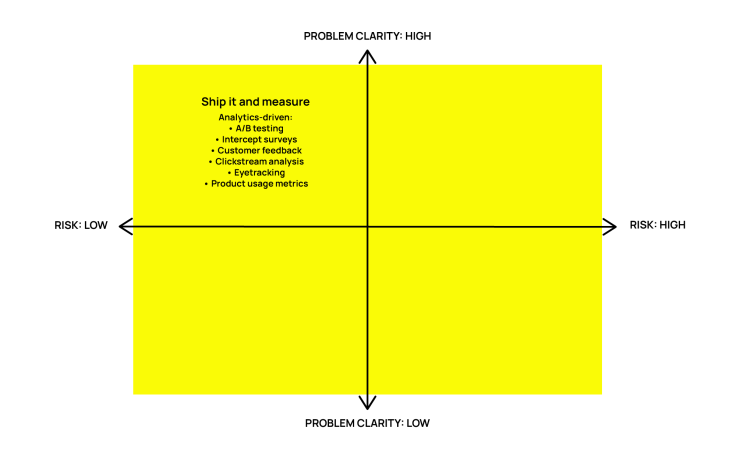
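As a quick illustration, the two-by-two can be sketched as a simple lookup. This is a minimal sketch, not part of the original framework: the `Level` enum and the `recommend_approach` function are hypothetical names, and collapsing each axis to a plain high/low value is an assumption made for brevity. The quadrant labels match the section headings that follow.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# Keys are (problem_clarity, risk); values are the quadrant names
# used as section headings in this article.
QUADRANTS = {
    (Level.HIGH, Level.LOW): "Ship it and measure",
    (Level.HIGH, Level.HIGH): "Design heavy",
    (Level.LOW, Level.LOW): "Research light",
    (Level.LOW, Level.HIGH): "Research heavy",
}


def recommend_approach(problem_clarity: Level, risk: Level) -> str:
    """Return the quadrant name for a given clarity and risk level."""
    return QUADRANTS[(problem_clarity, risk)]


# Example: a well-understood problem with a lot riding on the solution.
print(recommend_approach(Level.HIGH, Level.HIGH))
```

In practice, deciding whether clarity and risk are "high" or "low" is a judgment call to make with your stakeholders; the lookup only makes the resulting recommendation explicit.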

High problem clarity, low risk: Ship it and measure

When you are clear on the problem to solve for the customer, and there’s a relatively low risk of getting your solution wrong (for example, an incremental improvement to an existing feature), the most effective option is usually to ship it and measure how real users interact with it.

Before shipping, make sure you have success metrics clearly articulated and a means of actually collecting and analyzing the data.

Keep in mind that any one of these methods on its own may not give you the full picture, so consider combining several of the following to gather your data.

A/B testing

Testing two variants of the product or feature side by side to observe the differences in user behavior.

Use A/B testing when choosing between alternative ideas. When you have a hypothesis that you can reduce to one variable, an A/B test is a great way to understand the impact of changing that variable. This might mean running a new version of a feature alongside the existing version or a completely new feature implemented in two slightly different ways.
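To judge whether a difference between two variants is more than noise, one common approach (an assumption here, not something the framework prescribes) is a two-proportion z-test on conversion rates. The sketch below uses only the Python standard library; the function name `two_proportion_z_test` and the sample numbers are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare conversion rates of variants A and B.

    Returns (z, p_value), where p_value is two-sided.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Cannot test: pooled conversion rate is 0 or 1.")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative numbers only: 100/1000 conversions for A, 150/1000 for B.
z, p = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the change in the single variable you tested is likely responsible for the difference, provided users were assigned to variants at random and the sample size was decided up front.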

Intercept surveys

A survey or a single question triggered at a particular point in the customer journey to gather data on whether what you’ve built is working.

Use intercept surveys to get immediate feedback and separate that sentiment from the entire product experience. Bear in mind the observer effect when using this method: by interrupting the customer mid-journey, you might bias their responses.

Customer feedback

Feedback channels for gathering customers’ broad opinions on the product (star ratings, NPS, customer service queries, etc.).

Use customer feedback when looking for unexpected negative impact. Use your existing customer feedback channels (star ratings, contact, etc.) to determine what customers think of what you shipped and track trends in that data.

Clickstream analysis

Aggregated or individual data that shows what users click and in what order.

Use clickstream analysis to understand how something impacts the entire journey. Whether you’re looking at aggregated data or individual customer interactions (using Hotjar or similar), seeing how people actually use the feature in real life gives you a great deal of insight into how effectively you’ve addressed the customer problem and if there are ways to optimize the solution further.
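For aggregated clickstream data, a simple funnel count is often a useful starting point. The following is a minimal sketch under stated assumptions: each session is a list of page names in the order they were visited, the funnel steps are unique, and the function name `funnel_counts` and the sample data are hypothetical rather than the output of any particular analytics tool.

```python
def funnel_counts(sessions: dict, funnel_steps: list) -> dict:
    """Count how many sessions reach each funnel step, in order.

    A session only counts toward a step if it already reached
    every earlier step in the funnel.
    """
    counts = [0] * len(funnel_steps)
    for events in sessions.values():
        step = 0
        for event in events:
            if step < len(funnel_steps) and event == funnel_steps[step]:
                counts[step] += 1
                step += 1
    return dict(zip(funnel_steps, counts))


# Illustrative sessions keyed by a made-up user ID.
sessions = {
    "u1": ["home", "search", "product", "checkout"],
    "u2": ["home", "product"],
    "u3": ["home", "search", "product"],
}
print(funnel_counts(sessions, ["home", "search", "product", "checkout"]))
```

Comparing these counts before and after a release shows where in the journey the change helped and where people still drop off.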

Eye tracking

Tracking the eye line of users on the screen while they’re using a digital product.

Use eye tracking to find out what captures people’s attention or where they’re getting stuck. We don’t all have eye-tracking technology available, but if your UX team uses this method, it can tell you what people find important (or struggle to locate) in your solution. For those of us without access to eye-tracking tech, recording mouse movement on each page can yield similar information with a lower barrier to entry.

Product usage metrics

Metrics that show which users are using the product, what they do with it, and how often.

Use product usage metrics to decide if what you built drives the right outcomes. Before you ship, you should already have defined the success metrics that will tell you if it worked. General usage metrics can also be a good indicator of how valuable your product is to customers (i.e., if they use it, it’s likely of value).

High problem clarity, high risk: Design heavy

When the problem you’re solving for the customer is clear, but there’s more risk if you get it wrong, it makes sense to use research methods that involve designing and testing your solution before building it.

It’s more time-consuming to work this way but worth it to mitigate risk.

Some of the research types you could use if you’re working in this quadrant are outlined below.

Prototypes

A working low-fidelity version of the product you intend to build that you can test with users. The level of risk and clarity will likely dictate the level of fidelity in your prototype. The following are common varieties of prototype:

Paper prototype. A paper representation of your solution that you can discuss and test with users.

Clickable mock-up. Using Figma or similar to link designs so that people can click through to different pages as they would on a real site.

Mechanical Turk. Build the user interface, then do the ‘automated’ background steps by hand.

No/low-code working prototype. Use simple no/low-code tools such as Bubble, Airtable, and Webflow to make a working prototype of the part of your solution that you want to test.

Use prototypes when you want to test an idea that is too difficult to explain to customers without them trying it. A classic example of this is the iPod—it would have been challenging to understand whether people could use it without making a prototype. The same is true for most novel interactions. If your research needs users to “imagine” what it would be like to use something, you should probably make a prototype of it.

NOTE: Because this framework is about prioritizing user research, we’ve talked about prototypes from that perspective, but prototyping is also an excellent way to ideate possible solutions or explore technical feasibility of different solutions.

User experience studies

Observing and analyzing how users interact with your product or its prototype.

Conduct user experience studies when you want to understand how people interact with your product or prototypes. Depending on your challenge and the available resources, this might range from simply observing a user following a test script when interacting with your product (or prototype) to fully benchmarked tests comparing with competitors and usability or accessibility standards.

Participatory design (co-design)

Designing a solution in collaboration with users, for example, having them suggest what goes where, which elements of a design work for them, and what would work better.

Use participatory design when your users know the problem better than you. For example, architects and town planners hold design consultations with local residents to flush out the elements of their designs that might go wrong. Or, in digital products, having users card sort or write their own titles or instructions on pages gives you an insight into their understanding of the problem that’s difficult to elicit with other research methods.

Desirability studies

Testing the desirability of your solution by showing it to customers and seeing whether they like it.

Use desirability studies to gather opinions or test perceived value without building the solution. It’s most effective to test desirability by having customers commit to buying one of the options presented. You can do this with a “fake door”—a landing page where people sign up for a waiting list to buy the product before it’s actually built.

Low problem clarity, low risk: Research light

When you have low problem clarity, you need to focus your discovery efforts on the problem space. However, when there is little risk attached to what you are building, it makes sense to keep the research to a minimum before exploring solutions.

Key questions to answer at this time are “Does this problem exist?” and “Is this problem worth solving?”

When you’re working in this space, the following research methods should give you enough detail in a short length of time:

Email surveys

A survey, usually with multiple-choice or scale-based questions, sent out to users or potential users by email.

Use email surveys to learn about users’ behaviors and attitudes once you know the problem well enough to write good questions. If you struggle to write questions that users can easily answer with multiple choice or a scale, you should probably do some more interviews first.

Lightweight interviews

Short interviews with a few broad, open questions.

Use lightweight interviews when you need to understand the problem quickly. These are similar to guerrilla research, such as catching customers outside a shop for quick questions about a product or brand. While it isn’t a strict rule, you’ll likely start to notice patterns that help you understand the problem once you’ve interviewed five to eight people, so there is no need for extensive recruitment.

Card sorting

Having users rank, order, or group cards with elements of your problem or solution written on them.

Use card sorting to understand how users’ mental models of a problem or solution are structured. For example, you could use cards with topics on them and have users group them into categories that make sense to them, then use that to structure the navigation of your product.
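One simple way to analyze card sort results is to count how often participants put the same two cards in the same group. The sketch below assumes each participant's sort is recorded as a list of sets of card names; the function name `pair_agreement` and the card names are hypothetical.

```python
from collections import Counter
from itertools import combinations


def pair_agreement(sorts: list) -> Counter:
    """Count how many participants grouped each pair of cards together."""
    counts = Counter()
    for groups in sorts:
        for group in groups:
            # Sort the names so each pair has one consistent key.
            for a, b in combinations(sorted(group), 2):
                counts[(a, b)] += 1
    return counts


# Illustrative data: two participants sorting three cards.
sorts = [
    [{"Billing", "Invoices"}, {"Profile"}],
    [{"Billing", "Invoices", "Profile"}],
]
for pair, n in pair_agreement(sorts).most_common():
    print(pair, n)
```

Pairs that most participants group together are good candidates to sit under the same navigation heading.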

Low problem clarity, high risk: Research heavy

This is the area that will likely involve the highest level of effort. When you’re working in the problem space, don’t understand the customer problem yet, and a lot is riding on what you build, you need to start at the beginning and research in plenty of detail.

This means using classic discovery methods to unpack the problem in its broadest sense and then continuing to research with increasing focus to home in on the area of the problem you want to solve for the customer.

The following research methods will likely be most useful when working on a challenge of this type:

Interviews

One-on-one discussions in which potential customers answer a prepared question set and the interviewer has time and flexibility to explore interesting or unexpected answers in more detail.

Use interviews to gather rich information about the problem space. The level of structure in your interviews depends on how well you already understand the problem space. When you have little understanding of the problem, broader exploratory questions about the situations in which customers are likely to be looking for a solution are most helpful. The interviews tend to become more structured and scripted as you understand the problem better. While not necessarily quick when compared with research-light methodologies, interviews yield more immediate results than comprehensive ethnographic research.

However you conduct your interviews, make sure to keep both a summary and transcript or recording of the interview. Often small comments that might not be relevant to the current problem you are solving will be useful in the future as your understanding of the problem space continues to develop.

Focus groups

Facilitated group discussions about a product or user problem. Usually recorded or observed by the researchers and facilitated by someone else.

Use focus groups to understand the general consensus on a topic and how people present their feelings about it when talking to peers. Focus groups are a useful way of gathering attitudinal research in a relatively short space of time. They are also less prone to participants giving answers that “please the interviewer” than one-on-one interviews—especially when facilitated by someone not directly connected to the product and observed on camera or through one-way glass.

Remember that focus groups tend to yield the “general opinion” on matters rather than people’s individual detailed views. So if you want to go into more detail or talk about potentially sensitive issues, interviews might be a more appropriate tool.

Diary / camera studies

Participants write or record a diary based on prompts written by the researcher.

Diary or camera studies are perfect for understanding how your product might fit within the user’s world. To make sure you get the most out of diary studies, make the prompts as specific and easy to understand as you can, and make the information capture methods as easy as possible for participants. For example, if they are too busy to write things up, let them send you voice messages for their diary entries.

Ethnographic field studies

A mixture of research methods mentioned above with a focus on studying participants in the environment where they would naturally encounter the problem you’re looking to solve. For example, shadowing participants for a few days to understand how they live or work, unpacking areas of interest with more detailed interviews or studies, or simply going out and observing how customers buy or use your products.

Use ethnographic field studies to understand the environment and context in which someone might use your product. Because of its academic-sounding name, people often assume ethnographic research needs to be done by a dedicated researcher. While that might be true for large-scale studies, basic field study techniques are simple enough for most product folks. In fact, you likely do it already to a certain extent when talking to customers... (You do talk to customers, don’t you?)

In summary

Choosing the right level of depth and research types for your discovery process is a challenge, but thinking of it in terms of risk and problem clarity should help you make a more effective decision on how to approach it.

Using the framework in this article should help you hit the “Goldilocks” sweet spot of the right amount of discovery to move ahead with confidence and learn what you need to deliver value to your customers. It’s really easy to get started, so why not try using it with some of the challenges you’re working on and see what discovery methods are most appropriate?

Written by Robin Zaragoza, Founder and CEO, The Product Refinery. Robin has been working in tech for 20 years and delivering product for the last 15 of those at companies of various sizes, from early-stage start-ups where she was the first product manager, to large publicly traded companies where she led teams of product managers.

Robin founded The Product Refinery, a product coaching company, to help product people further their skills and empower product teams to do their best work.
