Collaborative analysis: A better way of involving stakeholders in research

At Rosenfeld Media’s Advancing Research 2020, Dovetail’s UX Designer Lucy Denton presented a talk called ‘Collaborative analysis – a better way of involving stakeholders in research’. Lucy shared the methods she used in her previous role at Atlassian for analyzing and synthesizing research data as a team. Lucy worked at Atlassian as a UX designer for just over five years and recently joined the Dovetail team.

Involving stakeholders in research

As many others can probably relate, UX resources at Atlassian were usually thinly spread. This meant I often conducted research projects alone. I would create the research plan and any stimulus needed, such as discussion guides and prototypes, conduct the interviews or testing sessions, analyze the data, and finally present my findings back to my stakeholders at the end. A few years ago at Atlassian, and I feel in the industry in general, there was a push to include stakeholders in the research process as an opportunity for them to get exposure to customer pain and build firsthand empathy for the user. There are a lot of resources about the benefits of including stakeholders in research, so I won’t go into it too much here. At a high level, having stakeholders involved in the process can give your findings more credibility and helps you get buy-in on recommendations later down the line. However, in my experience, involving stakeholders in the research process in practice meant inviting product managers and developers to be silent observers in interview sessions. I have found this to be a fairly ineffective and unhelpful way of including them.

It’s not scalable for the whole team

You can only really have one or two observers along with the interviewer in the session before it starts to feel overcrowded and potentially uncomfortable for the participant. I have tried various ways to mitigate this, including having observers dial into a session with their cameras and audio turned off. However, I always introduced them at the beginning of the call (I would never have secret observers), so the participant always knew they were there. I feel that participants are less likely to be open about their struggles or their true feelings towards something when they know there’s a crowd listening in. For the research projects I was conducting, I would typically do six to eight interviews or usability tests per round. At the time I had eight team members, plus a product manager and a development lead, so 10 people altogether. With only one or two observers per session, that meant each stakeholder could join one, or maybe two, sessions per round.

Stakeholders develop premature conclusions based on a partial sample size

Listening to one or two interviews at the beginning of the project and then coming back later for the research findings playback is not helpful for the researcher or the observer. For one thing, people are likely to draw premature conclusions based on the sessions they attended at the beginning, which are only a partial sample. Those conclusions may not reflect the actual research findings, and because there can be a long gap between when they attended the sessions and when they hear the overall findings, I’ve found it hard to change their perspective. On top of that, people are more likely to remember and add weight to things they heard in person than to something they read in a report or saw on a PowerPoint slide.

Stakeholders don’t engage with playback reports and presentations

I find it hard to get stakeholders to engage with research findings presentations, which we called ‘research playbacks’ in my team. I found it especially hard to get stakeholders to engage with and act on research when they had been involved in a couple of interviews or testing sessions a week or two prior. In my team, some people felt that because they had been involved in the research and experienced it firsthand, they already knew what the findings were. Again, that view is based on a partial sample, which can misrepresent the research and make it difficult for researchers and designers to move forward. It is also just generally hard to digest and remember findings presented in a report or presentation format.

Collaborative analysis

For researchers and others in the UX field, it is the deep analysis of multiple sessions, and the synthesis of that data, that builds a holistic understanding of the problems and users, which is really what we’re trying to achieve when we include our stakeholders and team members in the process. In my experience, the analysis and synthesis part of the process is almost always done by one person alone, or sometimes two, but both were always within the UX team. It rarely included people from the broader team.

I’ve been experimenting with scaling this part of the process to include others on the team, particularly those who are not researchers and designers. This is what I would like to share with you today.

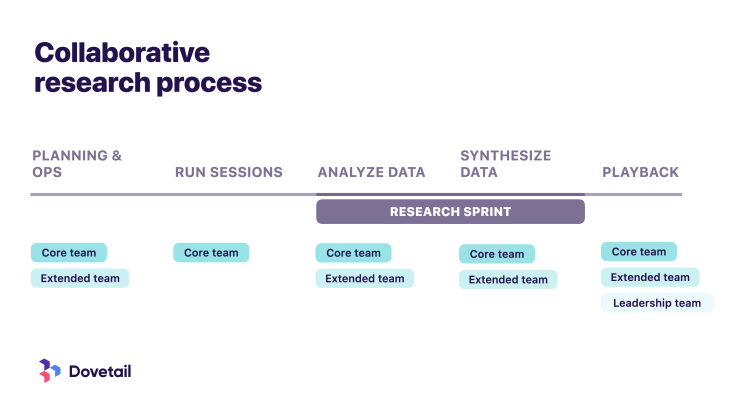

Dividing stakeholders into groups

The first thing I do is split my stakeholders into three groups: the core team, the extended team, and the leadership team. The core team are the primary stakeholders, which for me always included a product manager and fairly often a development lead. The extended team included the rest of the team working on the product or feature I was studying, usually a group of developers. The leadership team included executives and department heads, who were kept informed rather than directly involved.

The 5 phases

The process I typically used for the types of studies I was conducting, which were exploratory interviews and usability tests, involved five phases: planning and operations, running sessions, analyzing data, synthesizing data, and finally the research playback.

Phase 1: Planning and operations

In the planning and operations phase, I would work closely with my core team to create a research plan and any other stimulus we needed for the study (e.g. discussion guides and prototypes). The core team and I would sit together, discuss research goals and participant criteria, and create these assets together. This way, everyone agrees on and buys into the research goals and understands what we’re trying to get out of the research. I also hope they feel they’ve contributed to the project and have some stake in the outcome.

Then I would recruit and schedule the sessions just as I normally would. For team visibility, I scheduled sessions in a Google Calendar shared with the entire team, so everyone knew when the sessions were happening. If possible, I would include screener answers or demographic information about the participant in the calendar invites. Sometimes that’s not possible because of privacy constraints; in my team it depended on the type of study.

Phase 2: Running sessions

Then it was time to run the sessions. As the researcher I would always lead the session, but the core team was required to attend all sessions. For me, this meant my product manager and engineering lead would sit in on all interviews in a round. Attending every session gave them continuity: they experienced a complete round of sessions and were able to start building that richer understanding of the user. As primary stakeholders in the project, they need to invest more time in building that context for their own understanding, and their presence also helps researchers and designers get their buy-in. Later on, you can use your core team for support when communicating research findings to other stakeholders such as the leadership team.

In my case, the core team were also often leaders of the team. The development lead was the manager of the other developers, and the product manager is, of course, an influential role on the team. I think that if you can motivate the team leaders with customer problems, that energy filters through the rest of the team and makes for better team direction and morale.

Phase 3: Analyze data

Each interview session would be audio and video recorded, and then I would use an automated transcription tool to generate a transcript for each session. Then we entered the analysis phase.

As the researcher, I would define a taxonomy upfront based on the research goals. I would also include tags for general themes, like pain points and use cases, as well as tags for things that are interesting but might not be relevant to the current study. I would brief the core team and the extended team on how to code a transcript. Then, depending on the scope of the project and the time constraints, I would assign each team member transcripts to code. I wanted individuals in the extended team to gain experience with a larger number of participants, so each transcript was coded several times, sometimes up to four. The process was that each team member had to watch the recording, clean up the transcript where necessary (since it was generated with an automated transcription tool), and tag the transcript with themes. If someone else on the team had already coded that transcript, the next person would still watch the recording and follow the transcript, but they would review the tags the previous person had left and add any more they felt were necessary. In a way, it’s like peer-reviewing the tagging.

In my team, I would try to complete the entire collaborative analysis and synthesis process within a time-boxed period, usually one or two weeks depending on the scope of the project and time constraints. I call this the ‘research sprint’. It is important to move quickly through this process so it stays fresh in everyone’s minds. Team members, particularly in the extended team, have other responsibilities and work during this time, so time-boxing helps them prioritize the research alongside that work.

During the research sprint, at the end of every day that we were coding, we would do what I call the ‘research stand up’. In the research stand up, each person would share their top takeaways from the interviews they had listened to and coded that day. The purpose of the stand up is to hear takeaways from the entire team continuously throughout the research project, rather than having a big download of information from just one person at the end during the research playback. It’s quite repetitive, but that repetition helps findings from multiple data points sink in for the team.

Phase 4: Synthesize data

Once all the transcripts or notes have been coded, the next step in the research sprint is affinity mapping. I would take all the data points (usually direct quotes tagged with themes), and we would affinity map them as a group, including the core team and the extended team. I find this process easier to do as a group. I like to include the extended team if possible, because it ties together what they’ve been doing in the coding phase and helps them start building that holistic picture.

In the same session as the affinity mapping, I would facilitate an open discussion with the core and extended teams about what we think the top insights are. Usually, at this point, the top insights are quite obvious, so this was rarely a controversial discussion for my team. In the same discussion, I would also facilitate an open discussion about recommendations. I do all of this in the same meeting because it’s fresh in people’s minds, and I’m trying to reduce the number of meetings and context switching for the extended team.

Discussing recommendations at this point with stakeholders is really helpful. They are often the subject matter experts in the area you’re studying, whether it’s the product you’re improving or a feature you’re building, so they can often give great insight at this point. Also, brainstorming recommendations as a group is much better than one person coming up with them alone. Often the team can point out things I missed or bring a different perspective that makes the recommendations richer.

One thing to note is that it’s important to make sure the discussion doesn’t veer too far into design, ideation, or solutionizing, which was often difficult for my team because we love to discuss solutions. Keeping the discussion on track usually takes some pretty strong facilitation muscle, so be prepared for that.

As the researcher, I would take everything the team contributed to the insights and recommendations discussion and put together the final insights and recommendations for the research playback presentation. It’s important to take input from the team, but as the person with experience interpreting this kind of data, it’s a good idea for you to take the lead.

Phase 5: Playback

Finally, I put together the research playback presentation. At this point, the core team and the extended team should be well aware of the top insights and the general direction of the recommendations. They also have a lot of context on the project. That covers everything I would usually include in a research playback presentation and report, so the playback can feel like an unnecessary step. However, I do think it’s important to follow through on this last part of the process.

For one, this is where I include my leadership team. In the team I was working with, the leadership team was fairly hands-off; it was very much a bottom-up model, so this was enough involvement for them. For the extended and core teams, the playback is a good way to reflect on and wrap up the project. Again, the repetition of information helps those insights stick.

The playback assets, whether a presentation or a report, are important additions to your research repository. If you’re interested in learning more about research repositories, check out this blog post recapping a talk Dovetail CEO Benjamin gave recently at UX Australia.

A few other things to consider

That’s a walkthrough of the process I’ve been experimenting with to include stakeholders in research. I change it up and tweak it each time depending on the culture of the team, the time constraints of the project, and the topic we’re studying.

Something to take into consideration is that this process takes up a lot of the team’s time, and team members, especially in the extended team, have other responsibilities and work they need to do. In the past, I’ve had to compromise on how much of the team’s time I could get. For example, for discovery projects where we were conducting exploratory interviews that might influence our work over the next quarter or beyond, it was easier to get a week or two of the team’s time. However, for something like usability testing a feature, it didn’t make sense to pull the whole team away from their work, so I included only the two developers who were focusing on improving the feature we were testing. I’ve never had a problem getting the core team involved; as primary stakeholders, it’s important for them to prioritize research.

Lastly, I know not all leadership teams are as hands-off as the one I was working with. One thing I’ve done to include the leadership team more is invite them to the research stand-ups. This way they repeatedly hear takeaways from sessions from the entire team, which can help them feel involved, and that repetition can help drive home the findings later on.

That’s a wrap

I know every team, company, and research process is slightly different, however, I hope you’re able to take something away from this process and adapt it to your own team. I would love to hear what others are doing to include stakeholders and team members in the research process. Feel free to join our Slack community and we can continue the discussion!
